Before RAG, generative AI was smart, but it was trained only on static data. That left it with a few serious flaws: it could not access the internet to check today’s news, and it had never seen your company’s private files. Ask such a model a question it cannot answer, and it is so eager to please that it will confidently invent an answer rather than admit ignorance.

What is RAG?

RAG (Retrieval-Augmented Generation) is a technique that improves the accuracy and reliability of generative AI models by fetching facts from an external knowledge base before generating a response. It steers the model to ground its answers in specific, retrieved evidence rather than relying solely on its internal training data. The name describes its three stages: fetching data from a stored knowledge base is Retrieval, enriching the prompt with the fetched data is Augmentation, and producing an answer from that enriched prompt is Generation.

From Naive to Agentic: As RAG technology has matured, we have moved from simple search bots to complex reasoning engines.

Quick Comparison: Traditional vs. Agentic

| Feature | Traditional RAG (Naive/Advanced) | Agentic RAG (The Future) |
| --- | --- | --- |
| Role | The Librarian: Fetches books and hands them over. | The Research Assistant: Reads, analyzes, and finds the best answer. |
| Workflow | Linear: Input -> Search -> Answer. | Cyclical: Plan -> Search -> Critique -> Retry -> Answer. |
| Failure | If the search fails, the answer fails. | If the search fails, it retries with a new strategy. |
| Use Case | Simple FAQs and document summaries. | Complex analysis and decision-making. |
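To make the Workflow and Failure rows concrete, here is a minimal sketch of the two control flows. Everything in it is hypothetical: `naive_rag`, `agentic_rag`, `keyword_search`, and `rewrite_query` are toy stand-ins, the "critique" step is reduced to checking whether the search returned anything, and a real agentic system would typically use an LLM to plan and rewrite queries.

```python
from typing import Callable, List, Optional

Search = Callable[[str], List[str]]


def naive_rag(query: str, search: Search) -> Optional[str]:
    """Linear workflow: one search, then answer with whatever came back."""
    hits = search(query)
    return hits[0] if hits else None


def agentic_rag(
    query: str,
    search: Search,
    rewrite: Callable[[str], str],
    max_attempts: int = 3,
) -> Optional[str]:
    """Cyclical workflow: search, critique the result, retry with a new query."""
    attempt = query
    for _ in range(max_attempts):
        hits = search(attempt)
        if hits:  # critique step (toy version): did the search find anything?
            return hits[0]
        attempt = rewrite(attempt)  # retry with a new strategy
    return None


# Toy knowledge base keyed by title (hypothetical data).
DOCS = {"interstellar": "The mission is led by Cooper, a former pilot."}


def keyword_search(query: str) -> List[str]:
    """Exact-keyword search: only matches when the title appears in the query."""
    return [text for title, text in DOCS.items() if title in query.lower()]


def rewrite_query(query: str) -> str:
    """Hard-coded rewrite standing in for an LLM-driven reformulation."""
    return query.lower().replace("that movie about wormholes", "interstellar")


question = "Who leads the mission in that movie about wormholes?"
naive_answer = naive_rag(question, keyword_search)
agentic_answer = agentic_rag(question, keyword_search, rewrite_query)
print(naive_answer)
print(agentic_answer)
```

The linear version gives up when its single search comes back empty, while the loop gets a second chance after rewriting the query.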

The Storage Revolution

RAG can fetch data from many different sources. To understand how it works, we must first understand where it stores its “brain.” This is where RAG differs fundamentally from the systems we are used to.

1. Traditional Databases (The Old Way)

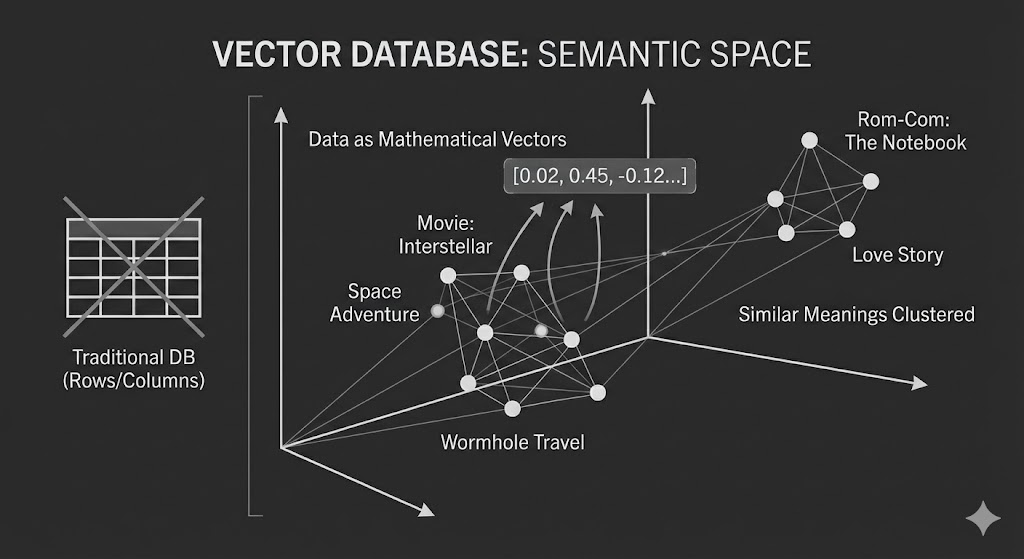

Traditional databases (SQL) store data in rows and columns. They rely on structured queries for storage and retrieval.

- How it works: You ask precise questions like, “Find the customer whose last name is Waters.”

- The Problem: These systems break down when the query gets “fuzzier.” If you ask, “Find me movies similar to Interstellar,” a traditional database struggles because it relies on exact keyword matches rather than understanding the vibe or meaning of the movie.
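The contrast between a precise query and a fuzzy one can be sketched with Python's built-in `sqlite3` module. The `movies` table and its three rows are made up for illustration; the point is only that a keyword filter returns nothing when the stored text never uses the words in the query.

```python
import sqlite3

# In-memory database with a small, hypothetical movies table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE movies (title TEXT, plot TEXT)")
conn.executemany(
    "INSERT INTO movies VALUES (?, ?)",
    [
        ("Interstellar", "Explorers travel through a wormhole to save humanity."),
        ("The Martian", "An astronaut is stranded on Mars and must survive."),
        ("The Notebook", "A couple falls in love in the 1940s."),
    ],
)

# A precise question works well: exact match on a column.
exact = conn.execute(
    "SELECT title FROM movies WHERE title = ?", ("Interstellar",)
).fetchall()

# A fuzzy question does not: no plot contains the literal phrase.
fuzzy = conn.execute(
    "SELECT title FROM movies WHERE plot LIKE ?", ("%space adventure%",)
).fetchall()

print(exact)
print(fuzzy)
conn.close()
```

The second query comes back empty even though two of the three rows are clearly space adventures, because `LIKE` compares characters, not meaning.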

2. Vector Databases (The RAG Way)

This is where Vector Databases stand out. Instead of rows and columns, data is represented as mathematical vectors (multidimensional arrays of numbers).

- Capturing the Essence: These vectors capture the “essence,” or semantic meaning, of any form of data, from text and images to abstract ideas.

- Similarity Search: At retrieval time, the database doesn’t look for exact words; it calculates the mathematical similarity between vectors. “Space adventure” and “Interstellar” sit close together in the vector space, even though the words are different.
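One common way to measure that closeness is cosine similarity. The sketch below uses hand-made three-dimensional vectors (the axes are imagined as "space", "adventure", and "romance"); these numbers are invented for illustration and do not come from any real embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical vectors; axes are (space, adventure, romance).
vectors = {
    "space adventure": [0.9, 0.8, 0.0],
    "Interstellar": [0.8, 0.7, 0.1],
    "The Notebook": [0.0, 0.1, 0.9],
}

sim_interstellar = cosine_similarity(vectors["space adventure"], vectors["Interstellar"])
sim_notebook = cosine_similarity(vectors["space adventure"], vectors["The Notebook"])

print(f"space adventure vs Interstellar: {sim_interstellar:.2f}")
print(f"space adventure vs The Notebook: {sim_notebook:.2f}")
```

With these toy numbers the first score lands near 1 and the second near 0, which is the behavior the bullet describes.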

The Storage Process: How RAG “Learns”

So, how do we actually get the data into this Vector Database? We don’t just copy-paste the text. The data must undergo a transformation process called the Ingestion Pipeline. Let’s look at how a movie plot for Interstellar gets stored.

Step 1: Chunking (The Slice)

LLMs have a limit on how much text they can process at once (context window). If we want to store a whole book or a long movie script, we first need to break it down into smaller, manageable pieces called Chunks.

- Raw Data: “A team of explorers travel through a wormhole in space in an attempt to ensure humanity’s survival. The mission is led by Cooper, a former pilot.”

- The Chunk: The system might slice this into a 50-word segment focused purely on the plot summary.
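A minimal sketch of word-based chunking follows. The helper name `chunk_words` and the `overlap` parameter are assumptions added for illustration; production pipelines often split on sentences, paragraphs, or tokens instead of plain words.

```python
def chunk_words(text, chunk_size=50, overlap=0):
    """Split text into chunks of at most chunk_size words.

    overlap is the number of words shared between consecutive chunks, so a
    sentence cut at a boundary still appears whole in one of them.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    start = 0
    while start < len(words):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
        start += step
    return chunks


raw = (
    "A team of explorers travel through a wormhole in space in an attempt "
    "to ensure humanity's survival. The mission is led by Cooper, a former pilot."
)

whole = chunk_words(raw, chunk_size=50)              # short text fits in one chunk
small = chunk_words(raw, chunk_size=10, overlap=2)   # smaller chunks with overlap
for chunk in small:
    print(chunk)
```

The raw plot summary is shorter than 50 words, so the default settings keep it as a single chunk; the smaller setting is only there to show how overlap carries words across a boundary.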

Step 2: Embedding (The Translation)

This is the most critical step. We pass that text chunk through an Embedding Model (such as one of OpenAI’s text-embedding-3 models or an open model from Hugging Face). The model doesn’t “read” the words the way we do; it encodes their semantic meaning as a list of numbers.

- Input: “Travel through a wormhole”

- Process: The model identifies concepts like Space, Physics, Adventure, Risk.

- Output (The Vector): [-0.02, 0.45, -0.12, 0.98 …]

Note: In a real scenario, this vector might contain 1,536 dimensions (numbers) to capture every nuance of the text.
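The shape of this step (text in, fixed-length list of numbers out) can be sketched without calling any real model. The function `toy_embed` below is a hypothetical stand-in that hashes words into a few buckets; it does NOT capture semantic meaning the way a trained embedding model does, and it is here only to show the input and output types.

```python
import hashlib
import math


def toy_embed(text, dims=8):
    """Stand-in for an embedding model: hash each word into one of dims buckets.

    This only illustrates the interface. A real embedding model is a trained
    neural network and places similar meanings near each other.
    """
    vec = [0.0] * dims
    for word in text.lower().split():
        token = word.strip(".,!?\"'")
        if not token:
            continue
        digest = hashlib.md5(token.encode("utf-8")).digest()
        vec[digest[0] % dims] += 1.0  # first hash byte picks the bucket
    norm = math.sqrt(sum(v * v for v in vec))
    # Normalize to unit length so vectors are comparable regardless of text size.
    return [v / norm for v in vec] if norm else vec


vector = toy_embed("Travel through a wormhole")
print(len(vector))
print([round(v, 2) for v in vector])
```

A production pipeline would replace `toy_embed` with a call to the chosen embedding model and get back a much longer vector.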

Step 3: Indexing (The Map Placement)

Finally, this vector is saved into a Vector Database (like Pinecone or Milvus) or a vector index library such as FAISS.

- The Storage Logic: The database doesn’t just list the numbers; it plots them as coordinates in a multi-dimensional space.

- The Result: Our Interstellar vector is placed in the “Sci-Fi” neighborhood, close to the vectors for Gravity and The Martian, but far away from The Notebook.
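The "neighborhood" idea can be sketched with a tiny in-memory index. The class `TinyVectorIndex` and the two-dimensional coordinates are invented for illustration; it scans every stored vector, whereas real vector databases generally rely on approximate nearest-neighbor structures to stay fast at scale.

```python
import math
from dataclasses import dataclass, field


@dataclass
class TinyVectorIndex:
    """Hypothetical brute-force index: stores (label, vector) pairs."""

    items: list = field(default_factory=list)

    def add(self, label, vector):
        self.items.append((label, vector))

    def nearest(self, query, k=1):
        """Return the k labels whose vectors are closest to query (Euclidean)."""
        ranked = sorted(self.items, key=lambda item: math.dist(query, item[1]))
        return [label for label, _ in ranked[:k]]


index = TinyVectorIndex()
# Made-up 2-d coordinates; axes are imagined as (sci-fi, romance).
index.add("Interstellar", [0.9, 0.1])
index.add("Gravity", [0.85, 0.15])
index.add("The Martian", [0.8, 0.1])
index.add("The Notebook", [0.1, 0.9])

neighbors = index.nearest([0.9, 0.1], k=3)
print(neighbors)
```

Asking for the three nearest neighbors of the Interstellar coordinates returns the sci-fi titles and leaves The Notebook out.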

Now that our data (the Interstellar plot) is safely stored as vectors in the database, what happens when a user actually asks a question? This is the “Runtime” process where the magic happens.

Here is the step-by-step flow of how the AI finds the answer:

Step 1: The User Query (The Input)

You ask a question in plain English.

- The user asks: “Who leads the mission in that movie about wormholes?”

Step 2: Vectorization (The Query Translation)

Before the system can search, it must speak the language of the database. The system sends your question to the same Embedding Model used in the storage step.

- The model converts your question into a vector (numbers).

- Notice you didn’t say “Interstellar,” but the vector for “movie about wormholes” is mathematically very close to the vector for “Interstellar” that we stored earlier.

Step 3: Retrieval (The Semantic Search)

The system takes your “question vector” and throws it into the Vector Database.

- The Search: It looks for the closest neighbors in that multi-dimensional space.

- The Find: It retrieves the chunk we stored earlier: “The mission is led by Cooper, a former pilot…”

- Crucially, it retrieves this before the AI tries to answer.
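Steps 2 and 3 can be sketched together: embed the question with the same function used for the stored chunks, then rank chunks by similarity. The `embed` function here is a hypothetical word-count stand-in with a small stopword list, so it only matches on shared words (here, "mission"). A real embedding model is what would let "movie about wormholes" land near the Interstellar chunks without any shared vocabulary.

```python
import math
import re
from collections import Counter

# Small, hand-picked stopword list (an assumption for this toy example).
STOPWORDS = {"a", "an", "the", "in", "of", "to", "by", "is", "that", "who", "about"}


def embed(text):
    """Stand-in embedding: sparse word-count vector, NOT a semantic model."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k=1):
    """Embed the question the same way as the chunks and return the top k."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


chunks = [
    "A team of explorers travel through a wormhole in space.",
    "The mission is led by Cooper, a former pilot.",
    "A couple falls in love in the 1940s.",
]

top = retrieve("Who leads the mission in that movie about wormholes?", chunks, k=1)
print(top)
```

The design point that carries over to a real system is that questions and chunks must pass through the same embedding function, otherwise their vectors are not comparable.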

Step 4: Augmentation (The Context Injection)

This is the secret sauce of RAG. The system doesn’t just send your question to the AI model (like ChatGPT). It combines your question with the found data to create a Super-Prompt.

Behind the scenes, the prompt the AI actually sees looks like this:

System Instructions: You are a helpful assistant. Answer the user’s question using ONLY the context provided below.

Context (Retrieved from Database): “A team of explorers travel through a wormhole… The mission is led by Cooper, a former pilot.”

User Question: “Who leads the mission in that movie about wormholes?”
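Assembling that prompt is plain string formatting. The helper `build_prompt` below is a hypothetical sketch that reproduces the three parts shown above; the exact wording and layout of a production prompt will vary by system.

```python
def build_prompt(question, context_chunks):
    """Combine instructions, retrieved chunks, and the question into one prompt."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "System Instructions: You are a helpful assistant. "
        "Answer the user's question using ONLY the context provided below.\n\n"
        f"Context (Retrieved from Database):\n{context}\n\n"
        f"User Question: {question}"
    )


question = "Who leads the mission in that movie about wormholes?"
retrieved = [
    "A team of explorers travel through a wormhole in space.",
    "The mission is led by Cooper, a former pilot.",
]

prompt = build_prompt(question, retrieved)
print(prompt)
```

The resulting string is what gets sent to the model in place of the bare question.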

Step 5: Generation (The Final Answer)

The AI reads the “Super-Prompt.” Because the answer is right there in the context (“…led by Cooper”), the AI doesn’t need to guess or hallucinate.

- AI Response: “The mission is led by Cooper.”

Real-World Use Cases

RAG is no longer just theory; it is the engine powering modern enterprise.

- Customer Support: Companies like Shopify and Klarna use RAG to fetch real-time user data, allowing bots to say “Your package is in Texas” rather than giving generic advice.

- Legal & Compliance: Law firms use it to scan contracts against internal policy databases, flagging risks that contradict company guidelines.

- Healthcare: Institutions like Mayo Clinic use RAG to summarize patient history, citing specific medical files so doctors can verify facts instantly.

- Coding: Tech companies build “Private Copilots” that answer questions based on their proprietary codebase rather than public forums.

Ultimately, the power of RAG comes down to a simple shift in how the AI operates.

Without RAG, using Generative AI is like asking a student to take a complex test relying solely on their memory. They might get it right, or they might confidently make things up because they “think” they remember the answer.

With RAG, that same student is allowed to take an open-book exam. The system places the textbook (your proprietary data) right in front of the model before it answers, so the model no longer needs to rely on outdated training or guesswork. By grounding every response in retrieved evidence, RAG turns AI from a creative storyteller into a source whose answers can be checked against the evidence.