The Death of the “One-Shot” Prompt

In the early days of Generative AI, the hype focused on the “magic” of a single sentence. We were told that finding the perfect “spell” would manifest perfect results. For students and professionals facing real-world deadlines, this myth has created more frustration than productivity. You’ve likely experienced it: you ask for a complex project summary or a nuanced study guide, and the output is generic, uninspired, or riddled with “hallucinations.”

The reality is that high-quality AI output is not found; it is engineered. The transition from a rough “Draft” to a polished “Masterpiece” does not happen in the initial prompt; it happens in the Iterative Loop. This post explores how to stop treating AI like a search engine and start treating it like a junior analyst through systematic refinement.

The Key Role of Iterative Design: Why “Once” is Never Enough

Iterative design is the discipline of continuously refining your instructions based on the AI’s previous response. It is the difference between asking a question and holding a conversation.

- Closing the Gap of Intent: There is often a chasm between what you thought you asked and what the model mathematically interpreted. Iteration bridges this gap, aligning model output with human expectation.

- The Filter for “AI-isms”: First-draft outputs are often riddled with predictable patterns, robotic phrasing, and corporate fluff. Iteration acts as a filter, stripping away the artificial tone to reveal professional-grade content.

- The Architect’s Blueprint: Complex tasks like a 20-page market analysis or a senior thesis literature review cannot be prompted effectively in one go. Iteration allows you to build the “foundation” first, then frame the “walls,” and finally add the “decor.”
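If you drive a model from code rather than a chat window, the same loop can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: `refine`, `fake_model`, and the `role`/`content` message shape are assumptions made for the example, and `fake_model` only stands in for whatever model client you actually use.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def refine(
    generate: Callable[[List[Message]], str],
    first_prompt: str,
    feedback_rounds: List[str],
) -> List[Message]:
    """Send one initial prompt, then one follow-up per piece of feedback.

    `generate` is a hypothetical stand-in for a model client: it receives
    the whole conversation so far and returns the next reply.
    """
    history: List[Message] = [{"role": "user", "content": first_prompt}]
    history.append({"role": "assistant", "content": generate(history)})

    for feedback in feedback_rounds:
        # Each round reacts to the previous draft instead of starting over.
        history.append({"role": "user", "content": feedback})
        history.append({"role": "assistant", "content": generate(history)})

    return history


def fake_model(history: List[Message]) -> str:
    """Placeholder so the sketch runs offline; it only counts user turns."""
    user_turns = sum(1 for message in history if message["role"] == "user")
    return f"draft v{user_turns}"


conversation = refine(
    fake_model,
    "Outline a market analysis for an eco-friendly water bottle.",
    ["Tighten the executive summary.", "Cut the corporate filler."],
)
print(conversation[-1]["content"])
```

The point of keeping the full `history` is that every follow-up is read in the context of the draft it is correcting, which is what separates iteration from simply re-asking.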

Why It Is Important: The ROI of Precision

The ability to iterate effectively is what separates casual AI users from power users.

- For Students (Authenticity and Depth): A one-shot prompt yields surface-level responses that often feel shallow or borderline plagiarized. Iterative prompting allows you to bake your own research, critical thinking, and unique voice into the final output, ensuring academic integrity.

- For Professionals (Reliability and Scale): If an AI produces a business report that is 90% accurate, the 10% error rate renders it unusable in a boardroom. Iteration pushes that accuracy toward 100%. Furthermore, a “Masterpiece” prompt becomes a scalable asset that your team can reuse repeatedly.

How It Works: The “Loop” Mechanics & The R-C-I-O Framework

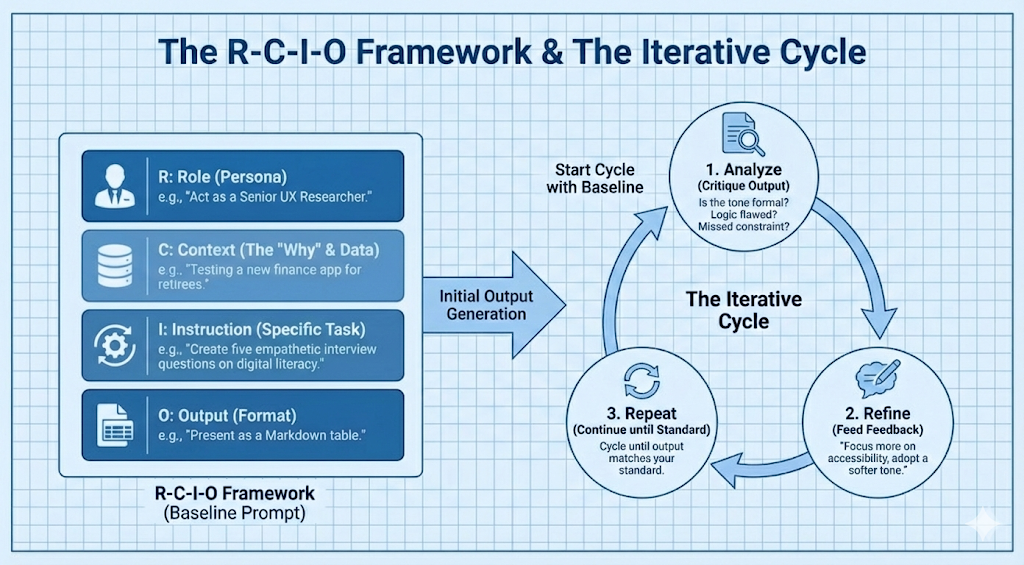

To iterate effectively, you need a strong baseline. We use the R-C-I-O Framework as our starting block to ensure the first draft is viable:

How to Use It: The Step-by-Step Protocol

To move a draft to a masterpiece systematically, follow these four stages of refinement:

- Stage 1: The Persona Launch (Level Setting)

Define expertise immediately. Don’t just say “Write a report.” Say, “Act as a Data Analyst specializing in SaaS metrics. I am going to provide you with a CSV of our Q3 churn rates.”

- Stage 2: Few-Shot Prompting (The Example Method)

The most powerful way to steer AI is to provide “shots” (examples) of success. Instead of describing a tone, show it.

Iteration: “Here are two cover letters I’ve written in the past that landed interviews. Analyze my writing style and tone, and use that style to draft a new letter for [Job X].”

- Stage 3: Negative Constraints (Setting Boundaries)

Tell the AI what not to do. Explicit exclusions can be as effective as positive instructions, especially for removing habits that showed up in an earlier draft.

Examples: “Do not use bullet points,” “Avoid buzzwords like ‘synergy’ or ‘comprehensive’,” or “Do not mention competitor Brand Y.”

- Stage 4: Chain-of-Thought (The Logic Method)

For complex math or argumentative writing, ask the AI to show its work. Add this phrase: “Before giving the final answer, think step-by-step and outline your reasoning.” This often reduces logic errors, although it does not replace fact-checking the result.
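The four stages can also be stacked into a single reusable prompt builder. The sketch below is one hedged way to do it in Python; `build_prompt` and all of its parameter names are hypothetical, and the example values simply echo the ones used in the stages above.

```python
from typing import List, Sequence


def build_prompt(
    persona: str,
    task: str,
    examples: Sequence[str] = (),
    avoid: Sequence[str] = (),
    show_reasoning: bool = False,
) -> str:
    """Assemble a prompt from the four stages; every argument is illustrative."""
    # Stage 1: persona first, then the task itself.
    parts: List[str] = [f"Act as {persona}.", task]

    if examples:
        # Stage 2: show the target style instead of describing it.
        parts.append("Match the style of these examples:")
        parts.extend(f"Example {i}: {text}" for i, text in enumerate(examples, 1))

    if avoid:
        # Stage 3: negative constraints, one per line.
        parts.append("Constraints:")
        parts.extend(f"- Do not {rule}." for rule in avoid)

    if show_reasoning:
        # Stage 4: the chain-of-thought phrase quoted in the text.
        parts.append(
            "Before giving the final answer, think step-by-step "
            "and outline your reasoning."
        )

    return "\n".join(parts)


prompt = build_prompt(
    persona="a Data Analyst specializing in SaaS metrics",
    task="Summarize the main drivers of our Q3 churn from the CSV I provide.",
    examples=["<paste a past summary you liked>"],
    avoid=["use bullet points", "use buzzwords like 'synergy'"],
    show_reasoning=True,
)
print(prompt)
```

Because each stage is an optional argument, you can add them one iteration at a time and see which one actually changes the output.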

The Evolution Gallery: A Real-World Case Study

Let’s demonstrate how a prompt evolves from a “Draft” to a “Masterpiece” for a Marketing Manager needing a Campaign Strategy.

| Level | The Prompt Strategy | The Result (The “Why”) |
| --- | --- | --- |
| Level 1 (The Draft) | “Write a marketing plan for a new eco-friendly water bottle.” | Generic. Received generic advice on social media and SEO. No usable strategy. |
| Level 2 (The Refinement) | “Act as a CMO. Create a plan for a $10k budget. Target Gen Z on TikTok. Use a bold, humorous tone.” | Better. More focused due to persona and context, but still lacks a unique “hook” or actionable timeline. |
| Level 3 (The Masterpiece) | “Act as a CMO. [Context: Budget $10k, Target Gen Z]. Use the ‘Problem-Agitate-Solve’ framework. Negative Constraint: No influencer marketing. Few-Shot: Use the bold tone of [Example Campaign A]. Output a 4-week calendar in a table.” | Professional. High-utility, ready-to-implement strategy that matches brand voice perfectly and adheres to budget constraints. |

Addressing the “Missing” Pieces: Professional Refinements

Even a Masterpiece prompt needs safeguards.

A. Handling Hallucinations (Human-in-the-Loop)

To safeguard critical work, use the Self-Critique Iteration. After the AI provides a complex response, prompt it with:

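“Review your previous response for factual inconsistencies or logical leaps. Point out any areas where you lacked specific data to make a definitive claim.”

This prompts the model to “double-check” its own work. Because a model can miss its own errors, treat the areas it flags as a checklist for your own verification, not as a final verdict.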
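In a scripted workflow, the self-critique turn is just one more user message appended after the model's answer. The following Python sketch assumes a simple `role`/`content` message list; `with_self_critique` and the sample conversation are hypothetical names and data used only for illustration.

```python
from typing import Dict, List

Message = Dict[str, str]

# The critique request quoted in the text, kept as a reusable constant.
SELF_CRITIQUE = (
    "Review your previous response for factual inconsistencies or logical "
    "leaps. Point out any areas where you lacked specific data to make a "
    "definitive claim."
)


def with_self_critique(history: List[Message]) -> List[Message]:
    """Return a copy of the conversation with the critique request appended."""
    if not history or history[-1]["role"] != "assistant":
        raise ValueError("Self-critique needs a model response to review.")
    # Build a new list so the caller's conversation is left untouched.
    return history + [{"role": "user", "content": SELF_CRITIQUE}]


history = [
    {"role": "user", "content": "Summarize our Q3 churn drivers."},
    {"role": "assistant", "content": "Churn rose mainly because of pricing."},
]
next_turn = with_self_critique(history)
print(next_turn[-1]["content"])
```

The guard clause is a small design choice: a critique only makes sense when the last message is something the model wrote.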

B. Prompt Versioning & Governance (For Teams)

Don’t let “Masterpiece” prompts get lost in chat history.

- Library: Store successful prompts in a shared company Notion or Google Doc.

- Variables: Use brackets to standardize prompts for team use. (e.g., “Act as a [Role] to analyze [Project Name] using data set [X].”)
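For teams that keep their library in code, the bracket convention is easy to enforce programmatically. Here is a small Python sketch, assuming placeholders are written as `[Name]`; the `fill` helper and the sample values are hypothetical and not part of any particular tool.

```python
import re
from typing import Dict

TEMPLATE = "Act as a [Role] to analyze [Project Name] using data set [X]."

# Matches anything written between one pair of square brackets.
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def fill(template: str, values: Dict[str, str]) -> str:
    """Replace each [Name] placeholder; fail loudly if a value is missing."""
    missing = sorted(set(PLACEHOLDER.findall(template)) - set(values))
    if missing:
        raise KeyError(f"Missing values for: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)


filled = fill(
    TEMPLATE,
    {"Role": "Data Analyst", "Project Name": "Q3 Churn Review", "X": "q3_churn.csv"},
)
print(filled)
```

Raising an error on a missing variable keeps a half-filled prompt such as “Act as a [Role]” from ever reaching the model.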

C. Troubleshooting the “Stuck” Prompt

Sometimes the AI gets stuck in a rut.

- The “Reset” Strategy: If the AI is looping on bad data, abandon the chat. Start a fresh window. Context windows get “cluttered” with previous bad attempts.

- Verb Swapping: If “Summarize” isn’t working, try “Distill,” “Condense,” or “Synthesize.” Different verbs can nudge the model toward a different length, level of detail, or structure.
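Verb swapping is easy to do systematically. The Python sketch below generates one variant of an instruction per alternative verb, so each can be tried in a fresh chat; `verb_variants` is a hypothetical helper, and it assumes the instruction begins with the verb you want to replace.

```python
from typing import List, Sequence

# The alternatives suggested in the text.
VERBS = ("Summarize", "Distill", "Condense", "Synthesize")


def verb_variants(instruction: str, verbs: Sequence[str] = VERBS) -> List[str]:
    """Swap the leading verb of an instruction for each alternative."""
    first_word, _, rest = instruction.partition(" ")
    # Skip the verb already in use so only new phrasings are returned.
    return [f"{verb} {rest}" for verb in verbs if verb != first_word]


for variant in verb_variants("Summarize this report in five sentences."):
    print(variant)
```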

The Advantages: Why Mastering This Skill Matters

- Time Compression: A task that used to take a human 10 hours can come down to roughly 1 hour of “Prompt Architecture” and 30 minutes of “Human Polish.”

- Consistent Quality: By iterating until you have a “Masterpiece Prompt,” you ensure that every subsequent output meets high standards regardless of who on your team uses it.

- Future-Proofing: As AI models evolve, the ability to “steer” them through iteration will remain a valuable skill, far more enduring than knowing how to use a specific tool interface.

Use Cases for Professionals and Students

- The Student (Literature Review): Start by asking for a summary of one paper. Iterate by asking the AI to compare its methodology to a second paper. Refine by asking it to identify specific “gaps in the research” across both papers to support your thesis.

- The Professional (Email Automation): Start with a basic follow-up template. Iterate by feeding it three examples of your previous emails so it learns your voice. Refine by adding a variable for each client.

The journey from a “Draft” to a “Masterpiece” is not about a single moment of inspiration; it is about a disciplined process of refinement. For college students, this method turns AI into a tutor that deepens understanding rather than a shortcut that bypasses it. For professionals, it turns AI into a force multiplier that elevates output quality and scale.

Stop looking for the “perfect prompt.” Start building the perfect process. The best results don’t come from the first click; they come from the fifth iteration.